The Hidden Bottlenecks of Legacy Infrastructure

Artificial intelligence is not just another software application; it is a resource-hungry powerhouse. Many organizations attempt to run modern AI workloads on aging on-premises servers, only to hit an immediate brick wall. Legacy infrastructure simply was not designed to handle the massive, dynamic demands of machine learning models.

When businesses try to force-fit advanced AI into traditional environments, several critical limitations quickly emerge:

- Compute constraints: AI requires immense processing power, primarily from specialized GPUs. Legacy data centers are usually built around general-purpose CPU servers with fixed capacity. Procuring and installing GPUs on-premises is slow, and the number you can add is limited by available rack space, power, and cooling.

- Severe data silos: AI models thrive on massive, interconnected datasets. Traditional architectures often trap valuable data across disconnected departmental servers and disparate storage arrays, making it nearly impossible to feed a unified stream of information to your AI engines.

- High latency: Real-time AI applications demand rapid data retrieval and processing. Aging on-premises networks frequently struggle with bandwidth limitations and slow disk storage, introducing delays that degrade the user experience and can render AI tools ineffective.

- Prohibitive capital expenditure (CapEx): Upgrading physical hardware for AI is a massive financial gamble. Purchasing top-tier GPUs, expanding cooling systems, and adding high-speed storage require huge upfront investments. This turns agile AI experimentation and rapid prototyping into a costly, high-risk endeavor.

Attempting to innovate within these outdated environments almost always leads to stalled projects and depleted budgets. To truly operationalize AI, organizations must eliminate these physical bottlenecks and move toward an architecture built for scale, flexibility, and speed.

How the Cloud Unlocks Generative and Agentic AI

Generative and agentic AI are fundamentally new ways of processing information, and they require infrastructure that traditional data centers struggle to support. Running these resource-hungry models on-premises is often inefficient. The cloud supplies the elastic compute, managed model access, and integration services needed to bring these technologies to life.

Here is exactly how modern cloud architecture fuels the next generation of artificial intelligence:

- Elasticity for Burst Compute: Generative AI workloads are notoriously spiky. Training, fine-tuning, and serving large bursts of inference requests demand immense computational power for short periods. Cloud environments offer on-demand elasticity, allowing organizations to quickly spin up GPUs for burst compute and scale back down just as quickly to control costs.

- Access to Cutting-Edge Managed Services: The AI landscape evolves almost daily. Instead of constantly rebuilding infrastructure to keep up, organizations can use enterprise-grade managed AI services from cloud platforms. Services like Amazon Bedrock and Azure AI let developers integrate leading foundation models into their applications through API calls.

- Agile Environments for Autonomous Agents: Agentic AI, where autonomous systems make decisions and execute multi-step workflows, depends on reliable integration across many systems. The cloud provides a natural environment for this: agents can call diverse microservices, serverless functions, and distributed data lakes through standard APIs to carry out complex, real-world tasks.
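To make the burst-compute idea concrete, here is a minimal sketch of a target-tracking scaling rule, assuming a hypothetical job queue whose depth is the load signal and a hypothetical throughput of `jobs_per_worker` per GPU worker. The function name and parameters are illustrative only; managed autoscalers (for example the Kubernetes Horizontal Pod Autoscaler) apply a similar proportional rule to their own metrics.

```python
import math


def desired_gpu_workers(queue_depth: int, jobs_per_worker: int,
                        min_workers: int = 0, max_workers: int = 8) -> int:
    """Return how many GPU workers to run for the current backlog.

    Hypothetical policy: provision enough workers to handle the queue at
    `jobs_per_worker` each, never below `min_workers` (0 lets idle capacity
    scale to zero) and never above `max_workers` (acts as a budget cap).
    """
    if jobs_per_worker <= 0:
        raise ValueError("jobs_per_worker must be positive")
    needed = math.ceil(queue_depth / jobs_per_worker)
    return max(min_workers, min(max_workers, needed))


# Simulate a spiky workload: quiet, a burst of fine-tuning jobs, then quiet again.
for backlog in [0, 3, 40, 120, 10, 0]:
    print(backlog, desired_gpu_workers(backlog, jobs_per_worker=10))
```

The upper clamp is the part that keeps elasticity from turning into runaway spend: during the 120-job spike the rule asks for 12 workers but is capped at 8.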

By migrating to the cloud, organizations do more than just upgrade their storage and servers. They build a dynamic, interconnected ecosystem where sophisticated generative models and autonomous AI agents can thrive, scale, and deliver immediate business value.

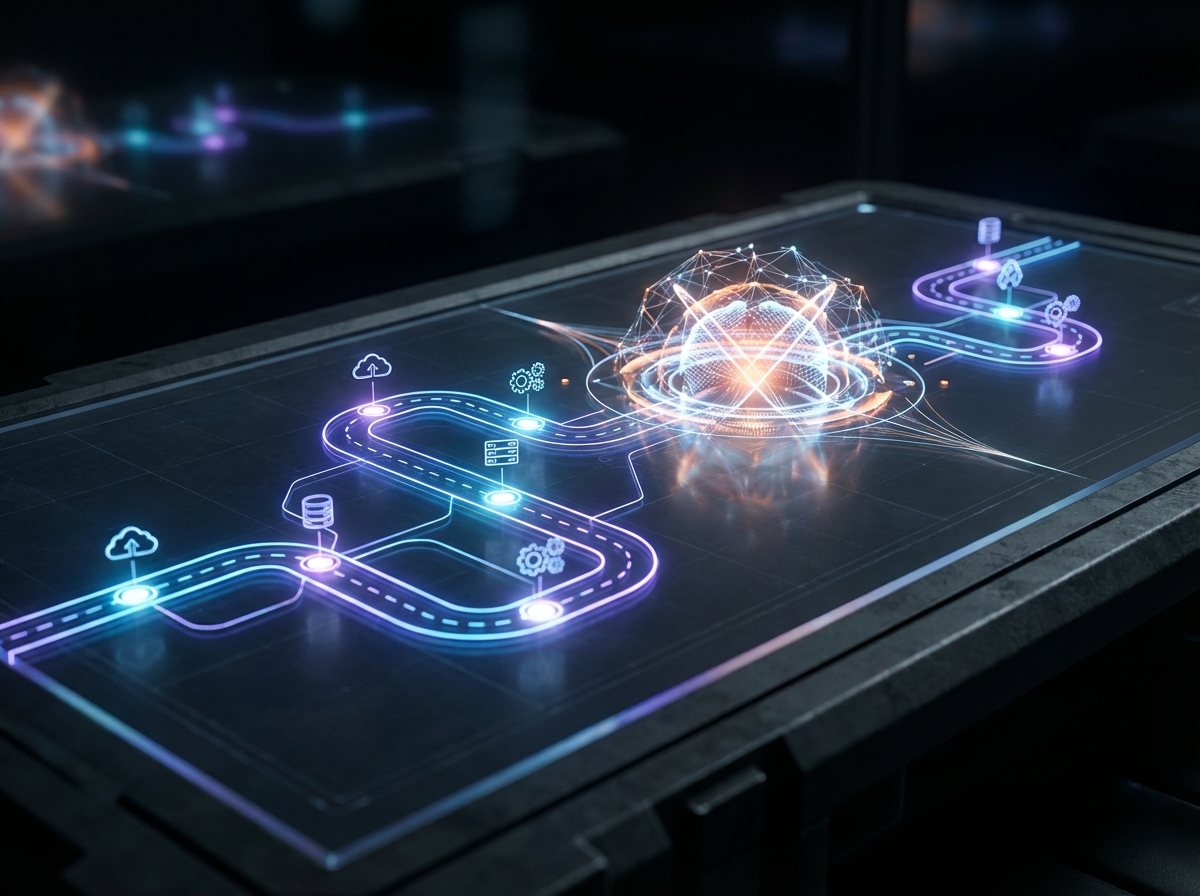

Building Your Cloud-to-AI Roadmap

A successful AI initiative doesn't happen by accident. It requires a deliberate, step-by-step roadmap that directly links your new cloud infrastructure to your long-term AI ambitions. For business and IT leaders, the transition from legacy systems to an AI-ready cloud environment involves four strategic steps:

- Assess current technical debt and data architecture: Before moving a single workload, evaluate where you currently stand. Take a hard look at existing technical debt and legacy systems. AI thrives on clean, accessible data, so untangling siloed or fragmented data architecture is a mandatory first step.

- Choose the right cloud model for AI scalability: Determine the best environment to support resource-heavy machine learning workloads, and consider whether a hybrid or multi-cloud strategy fits best. Hybrid models keep sensitive data under on-premises control while tapping flexible cloud compute, whereas multi-cloud setups let you pick the strongest AI and analytics tools from different providers and avoid relying on a single vendor's capacity.

- Prioritize migrating high-value data first: Avoid the trap of trying to move everything at once. Instead, adopt a phased approach by migrating your highest-value datasets first. Identify the specific data that will feed your initial AI pilots and prioritize its transfer. This strategy generates quick wins and demonstrates clear ROI without waiting for a massive organizational migration to finish.

- Establish cloud governance and AI-suited security: AI models consume massive volumes of information, which inherently amplifies your security and compliance risks. Lay down the rules early by establishing robust cloud governance. Implement strict access controls, data anonymization techniques, and continuous monitoring to ensure your future AI tools train on secure, compliant, and well-governed data.
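As one illustration of the data-protection step, the sketch below pseudonymizes records before they reach a training pipeline, assuming a hypothetical field policy (`PSEUDONYMIZE`, `DROP`) and a secret key that would in practice come from a secrets manager. Note that keyed hashing is pseudonymization rather than full anonymization: the same input always yields the same token, so joins across datasets still work, but the data may still count as personal data under regulations such as GDPR.

```python
import hashlib
import hmac

# Hypothetical field policy: which columns to tokenize and which to drop entirely.
PSEUDONYMIZE = {"customer_id", "email"}
DROP = {"phone", "street_address"}


def pseudonymize_record(record: dict, secret_key: bytes) -> dict:
    """Return a copy of `record` with identifiers tokenized and contact details removed.

    Identifier fields are replaced with a truncated HMAC-SHA256 token. Without
    the key, tokens cannot be reversed or recomputed from guessed inputs.
    """
    cleaned = {}
    for field, value in record.items():
        if field in DROP:
            continue  # direct contact details never leave the source system
        if field in PSEUDONYMIZE and value is not None:
            digest = hmac.new(secret_key, str(value).encode("utf-8"), hashlib.sha256)
            cleaned[field] = digest.hexdigest()[:16]
        else:
            cleaned[field] = value  # non-identifying attributes pass through unchanged
    return cleaned


key = b"load-me-from-a-secrets-manager"  # placeholder only; never hard-code real keys
row = {"customer_id": 4821, "email": "ana@example.com", "phone": "555-0100",
       "street_address": "1 Main St", "plan": "enterprise", "monthly_spend": 1200}
print(pseudonymize_record(row, key))
```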

By following these strategic steps, you ensure your migration goes beyond a simple lift-and-shift. Instead, you actively build a dynamic, scalable foundation perfectly tuned for artificial intelligence.

Breaking Down Data Silos: The Fuel for Future AI

There is a golden rule in artificial intelligence: your AI models are only as effective as the data they are trained on. In traditional on-premises environments, corporate data is notoriously fragmented. Customer records live in one system, financial metrics in another, and operational logs on a forgotten legacy server. These isolated data silos starve AI algorithms of the comprehensive context they need to generate valuable insights.

Cloud migration addresses this problem by acting as a powerful forcing function for data readiness. The process of moving to the cloud naturally compels organizations to audit, clean, and standardize their digital assets. Instead of lifting and shifting bad data habits, modern migrations enable businesses to consolidate their fragmented information into unified cloud data lakes and enterprise data warehouses.

This centralization provides several critical advantages for AI readiness:

- Unification: Cloud platforms seamlessly integrate structured, semi-structured, and unstructured data into a single, highly scalable repository.

- Standardization: Cloud data pipelines can clean and format data as it is ingested, enforcing consistent quality rules across the enterprise.

- Accessibility: Cloud environments feature robust APIs and governance frameworks that securely deliver data to the AI applications that need it.
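The unification and standardization steps can be sketched in a few lines. This example assumes two hypothetical silos, a CRM export and a billing export, that describe the same customers with different field names, ID formats, and date conventions; the schemas and function names are illustrative, not taken from any specific platform. Each source is mapped into one shared schema, then records are merged by customer ID.

```python
from datetime import datetime

# Two hypothetical silos exporting overlapping customers with different conventions.
crm_rows = [
    {"CustID": "0042", "Email": " Ana@Example.com ", "SignupDate": "03/15/2021"},
    {"CustID": "0077", "Email": "li@example.com", "SignupDate": "11/02/2022"},
]
billing_rows = [
    {"customer_id": 42, "email_address": "ana@example.com", "created": "2021-03-15", "mrr": "1200.00"},
]


def from_crm(row: dict) -> dict:
    """Map a CRM row to the shared schema (integer IDs, lowercase emails, ISO dates)."""
    return {
        "customer_id": int(row["CustID"]),
        "email": row["Email"].strip().lower(),
        "signup_date": datetime.strptime(row["SignupDate"], "%m/%d/%Y").date().isoformat(),
    }


def from_billing(row: dict) -> dict:
    """Map a billing row to the same shared schema."""
    return {
        "customer_id": int(row["customer_id"]),
        "email": row["email_address"].strip().lower(),
        "signup_date": row["created"],  # already ISO 8601 in this source
        "monthly_revenue": float(row["mrr"]),
    }


def unify(*sources: list) -> dict:
    """Merge standardized records into one record per customer_id.

    Later sources overwrite earlier ones on conflicting fields, a deliberately
    simple rule; production pipelines usually need explicit survivorship rules.
    """
    merged = {}
    for records in sources:
        for rec in records:
            merged.setdefault(rec["customer_id"], {}).update(rec)
    return merged


customers = unify([from_crm(r) for r in crm_rows], [from_billing(r) for r in billing_rows])
for cid, rec in sorted(customers.items()):
    print(cid, rec)
```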

Creating this unified data foundation is critical for modern AI architectures, particularly Retrieval-Augmented Generation (RAG). RAG frameworks retrieve relevant, up-to-date proprietary information from your internal data stores at query time and pass it to the model as context, so it can answer complex enterprise questions. If your data remains locked in disjointed legacy silos, RAG cannot reliably find the right context or enforce consistent access controls over it, so its answers are neither secure nor accurate.
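The retrieval half of RAG can be illustrated with a toy sketch. It assumes a handful of hypothetical document snippets and uses bag-of-words cosine similarity as a stand-in for the learned embeddings and vector database a real deployment would use; it stops at building the augmented prompt and does not call any model.

```python
import math
import re
from collections import Counter

# Hypothetical snippets from a unified data store; real systems index chunked
# documents with embeddings in a vector database instead of raw word counts.
DOCUMENTS = {
    "refund-policy": "Enterprise customers can request a refund within 30 days of invoice.",
    "sla": "The enterprise plan guarantees 99.9 percent monthly uptime.",
    "onboarding": "New customers receive a dedicated onboarding manager for 60 days.",
}


def tokenize(text: str) -> Counter:
    """Lowercase word counts, a crude stand-in for an embedding vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question: str, k: int = 2) -> list:
    """Return the ids of the k documents most similar to the question."""
    q = tokenize(question)
    ranked = sorted(DOCUMENTS, key=lambda doc_id: cosine(q, tokenize(DOCUMENTS[doc_id])), reverse=True)
    return ranked[:k]


def build_prompt(question: str, k: int = 2) -> str:
    """Augment the question with retrieved context before it is sent to a model."""
    context = "\n".join(f"[{doc_id}] {DOCUMENTS[doc_id]}" for doc_id in retrieve(question, k))
    return f"Answer using only the context below.\n\nContext:\n{context}\n\nQuestion: {question}"


print(build_prompt("How many days do enterprise customers have to request a refund?", k=1))
```

If the refund policy lived in a silo the retriever could not reach, the prompt would simply lack that context, which is the failure mode the paragraph above describes.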

By migrating to the cloud and dismantling these legacy barriers, you are doing more than modernizing your IT infrastructure. You are actively refining the high-octane data fuel that will power your organization's future AI capabilities.